[talk about picking and choosing]

When we hear about mixing, a lot of attention is devoted to balancing and equalizing. To understand why these two things are important, we should consider how mixing works and how sounds come together in general.

When our brain perceives a sound wave in real life, the sound is usually not as pure as it was when it left its source. In between, it is transformed by its physical travel as acoustic energy, and it is superimposed on and distorted by sounds coming from other sources.

When we listen to a mixed song, we are (most commonly) hearing two tracks, one for the left ear and one for the right, a.k.a. stereo. Both of these tracks contain similar sonic information, incorporating multiple instruments, voices, noises, pulses, and other parts of a mix. How does all this seemingly different sonic information come together into only a pair of tracks?

Think of the most basic property of sound: the overtone series. When a note is played, we hear most prominently the fundamental frequency (that of the note played), along with the overtones, which are integer multiples of that fundamental frequency (or integer fractions of its wavelength, depending on how you look at it). Each overtone represents certain characteristics of the Lydian scale; depending on which characteristics those are and how prominent (loud) they are relative to each other, the overall tone may be perceived as one instrument or another (or even as multiple instruments).
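To make the "multiples" idea concrete, here is a minimal sketch that lists the first few partials of a note. The function name `overtone_series` is made up for this illustration, and A at 220 Hz is just an example value.

```python
def overtone_series(fundamental_hz, count):
    """Return the first `count` partials: the fundamental and its integer multiples."""
    return [fundamental_hz * n for n in range(1, count + 1)]


# Example note (an assumption for illustration): A at 220 Hz.
for n, freq in enumerate(overtone_series(220.0, 5), start=1):
    print(f"partial {n}: {freq:.0f} Hz")
```

Partial 1 is the fundamental itself; every later partial is a whole-number multiple of it, which is why the overtones line up so neatly with the note being played.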

When we are mixing, a similar thing occurs, except that instead of pure harmonics, complex waveforms are superimposed. So let’s begin by looking at the superimposition of overtones, and hopefully we’ll get some insight into how waves interact when they are mixed.

Thus, let’s talk about some overtone basics, so that we can better judge what effect various mixing techniques will have.

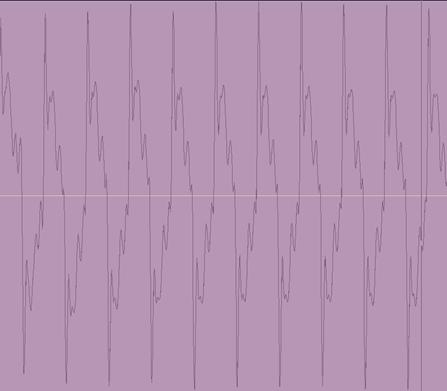

First, we will examine a close series of overtones. Be forewarned: these are guitar-produced waves, so not all shapes are perfect (also, disregard the relative phase). These samples are normalized to demonstrate the shapes; in reality, the amplitude of each overtone is different.

Notice how each succeeding overtone, being at a higher frequency than the previous one, alters the minute detail of the fundamental shape. The shorter the overtone’s wavelength with respect to the fundamental, the finer the detail will be. We’ll take a look at what role that plays in a minute; for now, let’s look at a selection of four overtones from the series above (the fundamental, the fifth, the second octave, and the major 3rd):

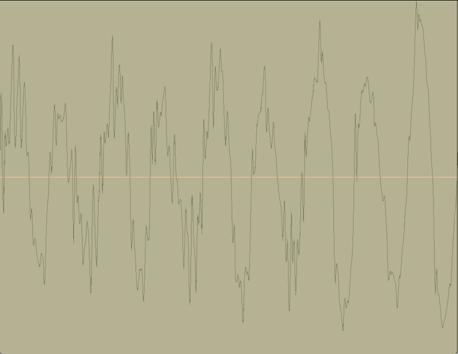

Again, these are normalized to make the shapes easiest to compare. However, to simulate a real overtone series, we’ll reduce the volume of each shape: the 5th will be reduced by 6 dB, the octave by 12 dB, and the major 3rd by 15 dB.
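For a feel of what those reductions mean in terms of wave height, here is a small sketch that converts each decibel change into a linear amplitude factor using the standard 20·log10 amplitude convention. The names `db_to_gain` and `reductions_db` are made up for this illustration.

```python
def db_to_gain(db):
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)


# Level changes from the text, relative to the fundamental.
reductions_db = {"fundamental": 0, "5th": -6, "octave": -12, "major 3rd": -15}

gains = {name: db_to_gain(db) for name, db in reductions_db.items()}
for name, gain in gains.items():
    print(f"{name}: x{gain:.3f}")
```

A handy rule of thumb falls out of this: every 6 dB of reduction roughly halves the amplitude of the wave.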

Every instrument produces various harmonic overtones, and as we discussed in the Recording article, the stages between the acoustic energy produced by an instrument and the digital data recorded to represent that energy will color the tone by boosting or reducing the various overtones that the instrument produces. This is exactly what makes different instruments (such as guitars and trumpets) sound distinct from each other; it is also the property that differentiates various pickups, microphones, amps, and speakers. However, our aforementioned approximation will do for this illustration.

So let’s assume that each of the above four waves is a function plotted on a graph (which it is: a decibel graph), and that the zero crossing is the Y=0 position. Now we’ll add the four “functions”, all together as well as in selective combinations. Below are the three representations in successive superimposition:

Here is another diagram, showing the previous two at scale with each other. Notice how each additional overtone further molds the final shape of the wave.
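The successive superimposition can also be sketched in a few lines of code. This is only an approximation under stated assumptions: each partial is a pure sine starting at zero phase, and the four chosen overtones are mapped to the 1x, 3x, 4x, and 5x multiples of the fundamental (that mapping, and the names `PARTIALS` and `superimpose`, are mine, not something the diagrams specify).

```python
import math


def db_to_gain(db):
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)


# (frequency multiple, level in dB). Assumed mapping of the four partials:
# fundamental = 1x, fifth = 3x, second octave = 4x, major 3rd = 5x.
PARTIALS = [(1, 0), (3, -6), (4, -12), (5, -15)]


def superimpose(partials, samples=16):
    """Add the partials point by point over one cycle of the fundamental."""
    wave = []
    for i in range(samples):
        t = i / samples  # position within the cycle, from 0 up to (not including) 1
        wave.append(sum(db_to_gain(db) * math.sin(2 * math.pi * mult * t)
                        for mult, db in partials))
    return wave


# Add one partial at a time and watch the peak of the summed shape change.
for k in range(1, len(PARTIALS) + 1):
    wave = superimpose(PARTIALS[:k])
    print(k, "partial(s), peak:", round(max(abs(s) for s in wave), 3))
```

Adding the "functions" really is just adding their Y values at each point in time; the quieter, higher partials only nudge the shape set by the fundamental.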

The shape generated in pale red above is, for our current purpose, a representation of what some physical instrument might “sound” like. The shape of the wave is what lets us differentiate that particular instrument from any other whose waveform is different enough. The shapes for many acoustic instruments (especially grand piano or acoustic guitar) are very smooth (like the one we have above); other instruments, however, such as overdriven electric guitar, not so much.

Let’s consider why this happens. Suppose we took a pure guitar tone and ran it through an amp that boosted the hell out of a particular group of relatively high overtones, into infinity (clarification for modeler users: Mid-Freq=2500Hz; Mid-Gain=+9; Amp-Gain=80). Next, a relatively low note is played (e.g. A=220); it determines the overall shape of the sound, as did the fundamental in our previous example. The super-boosted high-frequency overtones (for the A in question, overtone octaves 10-12; we’re talking dog whistle here) are superimposed. Our end result is a jagged sound wave that we perceive as noise or distortion.

Here’s a zoom of an overdriven harmonic, drastically normalized to examine the shape more closely:

Here’s a zoom on the sound wave of a single-note lead guitar sound (including all overtones; this is the only one here that’s an actual guitar sound). You may be thinking, “Wow, that’s cool! So that’s what I want all my waves to look like!” Well, not really. When we’re superimposing waves in a mix (usually dozens of different sound sources), and more than a few of those sources have similar-frequency harmonics, those edges you see on the last diagram become noise rather than [good-sounding] distortion, and end up ruining the mix.

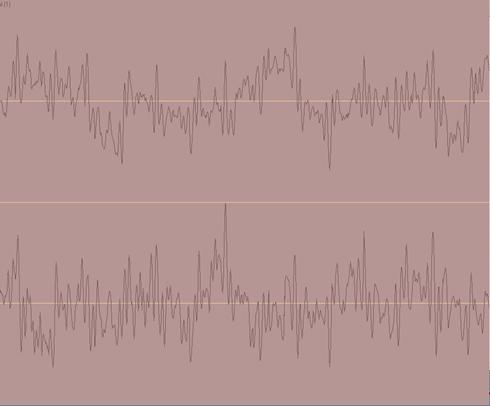

Finally, here’s what the wave shape of an entire mix looks like. It includes all the instruments, drums, voice, reverbs, and other effects; all the fundamentals and overtones of all those things are superimposed. Notice that there’s a considerable difference between the Left and Right stereo channels:

Hopefully, the sounds we captured during recording do not overlap too much in frequency range; in mixing, we will take care to do several other things. We know that the loudest parts of the mix will have the biggest effect on the overall shape; therefore, all the loudest objects should be enumerated by the frequencies they predominantly affect. The softer parts will dictate which details are most heard; this is where we get qualities such as “presence” or “annoying buzz” in our mix. There are three points to keep in mind here:

- Determine which frequency range of each instrument makes it sound best; boost that frequency slightly and lower it in all other instruments in the mix.
- Balance the levels of recorded parts in different ranges (e.g., bass and rhythm guitar) so that their superimposition does not cause them to clash, drown each other out, and bring mud to the mix.
- Ensure that the peaks of the tracks’ overall amplitude ranges are not too drastic; lower those that stick out.
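The last point can be roughed out in code. This is a minimal sketch rather than a real mixing tool: it measures each track's peak, applies a plain gain trim (not a compressor or limiter) to any track that pokes above a ceiling, and then sums the tracks sample by sample. The -6 dB ceiling, the toy sample values, and the names `peak_dbfs` and `tame_peaks` are all assumptions made for illustration.

```python
import math


def peak_dbfs(samples):
    """Peak level in dB relative to full scale (1.0)."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")


def tame_peaks(tracks, ceiling_db=-6.0):
    """Scale down any track whose peak exceeds the ceiling; leave the rest alone."""
    result = {}
    for name, samples in tracks.items():
        excess = peak_dbfs(samples) - ceiling_db
        gain = 10 ** (-excess / 20) if excess > 0 else 1.0
        result[name] = [s * gain for s in samples]
    return result


# Toy tracks (made-up sample values in the range -1.0 to 1.0).
tracks = {
    "bass": [0.0, 0.9, -0.8, 0.3],
    "rhythm guitar": [0.0, 0.2, -0.3, 0.1],
}

tamed = tame_peaks(tracks)
# The mix itself is the sample-by-sample sum of the tracks.
mix = [sum(column) for column in zip(*tamed.values())]

for name, samples in tamed.items():
    print(f"{name}: peak {peak_dbfs(samples):.1f} dB")
```

Here the bass sticks out and gets pulled down to the ceiling, while the rhythm guitar is already below it and passes through untouched.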

Eat that, bizzatch!